The Digital Couch: Will AI Substitute The Traditional Mental Health Therapist?

The landscape of modern healthcare is undergoing its most profound transformation since the invention of the stethoscope. As we move deeper into 2026, the integration of technology into our private lives has reached a fever pitch, particularly within the realm of emotional well-being. A question that was once relegated to science fiction has now become a central debate for clinicians, policymakers, and patients alike: Will AI substitute the traditional mental health therapist?

With global provider shortages reaching a breaking point, millions of people find themselves on months-long waiting lists for a single hour of professional conversation. Technology has rushed in to fill that void. However, the transition from a human-to-human connection to a human-to-machine interface is fraught with ethical complexities, biological limitations, and the fundamental question of what it actually means to “heal.” To understand whether AI can truly stand in for a human, we must examine the intersection of psychology, psychiatry, and computer science.

The Rise of the Algorithmic Intervention

For over a century, the standard of care for mental health has been the “talking cure”—a dedicated space where a patient sits with a licensed mental health therapist to unpack the complexities of the human experience. This model relies heavily on the “therapeutic alliance,” a unique bond built on trust, shared humanity, and mutual presence.

However, the 2020s sparked a digital gold rush in “Telemental Health.” Initially, this meant simple video calls, but it quickly evolved to include chatbots built on Large Language Models (LLMs). These AI systems are trained on enormous volumes of text, on the order of trillions of words, which may include clinical textbooks and therapy transcripts. Today’s AI doesn’t just respond with pre-written scripts; it generates fluid, context-aware dialogue that can mimic the tone and structure of a Cognitive Behavioral Therapy (CBT) session.

But as these tools become more sophisticated, we must ask: Is a simulation of empathy enough to cure a clinical disorder? Or are we simply using a high-tech “Band-Aid” for a systemic wound?

The Case for AI: Accessibility, Anonymity, and Data

The arguments in favor of AI as a mental health therapist substitute often center on the failures of the current human-led system. In many parts of the world, mental healthcare is a luxury, not a right.

1. Solving the Accessibility Crisis

The most undeniable “pro” of AI is its availability. A human therapist works forty hours a week and requires sleep, food, and emotional downtime. An AI is available at 3:00 AM on a Tuesday during a panic attack. For individuals living in rural areas or developing nations where there is perhaps one psychiatrist for every 100,000 people, an AI-driven platform isn’t just a “substitute”—it is the only option. By removing the barriers of geography and scheduling, AI democratizes a form of support that was previously gated by wealth and location.

2. Radical Honesty through Anonymity

There is a fascinating psychological phenomenon known as the “online disinhibition effect.” Studies suggest that many patients find it easier to disclose “shameful” secrets (such as struggles with substance abuse, intrusive thoughts, or sexual dysfunction) to a machine than to a person. The fear of being judged, however subtle, often lingers in a traditional therapy room. With an AI, that barrier is much lower. Patients often report feeling a sense of “radical honesty” when interacting with a digital interface, knowing that the machine lacks a moral compass or a personal ego.

3. Enhancing Psychiatry through Big Data

While psychology focuses on behavior and thought patterns, psychiatry deals with the biological and chemical underpinnings of the mind. This is where AI shows particular promise. In 2026, AI algorithms are being used to analyze speech patterns for “linguistic markers” of depression or the rapid-fire “pressured speech” of a manic episode. By analyzing data from wearable devices, such as heart rate variability, sleep cycles, and physical activity, AI may be able to flag early warning signs of a relapse, potentially weeks before it becomes obvious. This opens the door to a level of proactive, preventative care that a human doctor, seeing a patient once a month, would struggle to achieve alone.

The Human Deficit: Why AI Falls Short

Despite the technical brilliance of these systems, there are core elements of the human psyche that cannot be reduced to code. If we attempt to substitute a mental health therapist entirely with a machine, we risk losing the “soul” of the healing process.

1. The Myth of “Emulated” Empathy

The most significant hurdle for AI is the lack of genuine consciousness. An AI can be programmed to say, “I am so sorry you are going through that; it sounds incredibly painful.” However, the machine does not feel the weight of that pain. Human empathy is rooted in biology: some researchers link it to mirror neurons, and our brains appear to “sync up” when we share emotional space. In a crisis, a patient isn’t just looking for the right words; they are looking for the felt sense that another human being is bearing witness to their suffering. Without this, therapy risks becoming a cold, transactional exchange of data points.

2. The Danger of Misdiagnosis in Complex Cases

AI excels at structured interventions like CBT, which is essentially an algorithm for the mind. However, mental health is rarely that neat. Many patients suffer from “comorbidity,” the co-occurrence of several conditions such as depression, trauma, and personality disorders. A human mental health therapist uses intuition, body language, and “clinical gut feeling” to navigate these waters. An AI might miss the subtle shift in a patient’s tone that indicates they are hiding a deeper trauma, or it might misinterpret a cultural nuance as a symptom of a disorder. In the field of psychiatry, the stakes are even higher. A machine cannot legally or ethically manage the nuance of medication titration or the involuntary commitment of a patient who is a danger to themselves or others.

3. The “Echo Chamber” Risk

AI is designed to be agreeable and helpful. However, effective therapy often requires “clinical confrontation.” A good therapist will challenge a patient’s distorted reality or push back against self-destructive narratives. An AI, programmed for “user satisfaction,” may inadvertently validate harmful thoughts simply because it is trying to maintain a positive user experience. There is a risk that AI becomes a “comfort bot” rather than a catalyst for the difficult, painful work of personal growth.

The Ethical Minefield of 2026

As we consider the role of AI in our mental lives, we must address the “elephant in the room”: data privacy. In a traditional setting, your sessions are protected by strict doctor-patient confidentiality laws. In the digital world, your most intimate thoughts are data points. Who owns that data? Could a future employer or insurance company purchase a “risk profile” based on your conversations with an AI therapist? Until robust, global regulations are in place, the total substitution of human specialists remains a significant privacy and security risk.

Furthermore, there is the issue of “algorithmic bias.” If an AI is trained primarily on data from Western, educated, and wealthy populations, it may be less effective—or even harmful—for individuals from different cultural backgrounds. A human therapist can learn and adapt to a patient’s specific cultural context in a way that current static models struggle to do.

Moving Toward a “Cyborg” Model of Care

The most likely outcome of the next decade is not the replacement of the therapist, but the evolution of the “Super-Therapist.” Instead of seeing AI as a substitute, we should view it as a high-powered assistant.

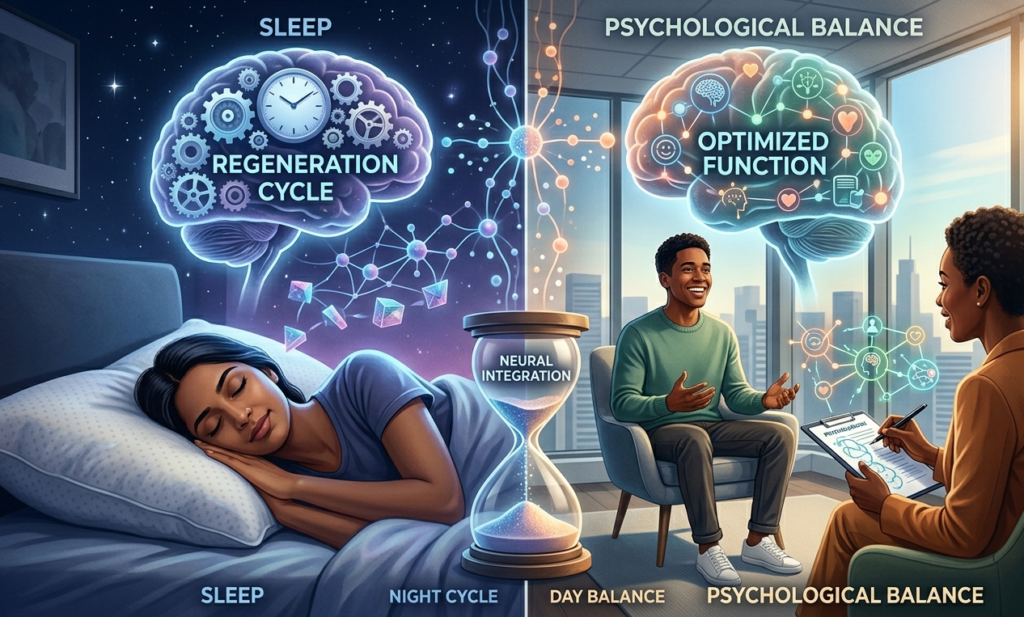

In this hybrid future, AI handles the “low-level” maintenance. It checks in with the patient daily, helps them practice breathing exercises, and tracks their mood. When the data suggests the patient is struggling, the AI “triages” the case and alerts the human mental health therapist. This allows the human specialist to focus exclusively on the high-level, complex emotional work that requires a human soul.
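To make the check-in and triage loop described above concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the `DailyCheckIn` schema, the 1 to 10 mood scale, the `needs_human_review` function, and the thresholds are hypothetical and are not taken from any real product or clinical guideline.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class DailyCheckIn:
    """One self-reported check-in (hypothetical schema)."""
    day: int   # day index, 0 = oldest
    mood: int  # self-rated mood, 1 (very low) to 10 (very good)


def needs_human_review(checkins, window=3, low_mood=4.0, drop=2.0):
    """Return True when recent check-ins suggest the patient is struggling.

    The case is flagged if the average mood over the last `window` check-ins
    is at or below `low_mood`, or if that average fell by at least `drop`
    points compared with the `window` check-ins before it.
    All thresholds are illustrative, not clinically validated.
    """
    ordered = sorted(checkins, key=lambda c: c.day)
    if len(ordered) < window:
        return False  # too little data to judge; keep collecting
    recent = mean(c.mood for c in ordered[-window:])
    if recent <= low_mood:
        return True
    previous = ordered[-2 * window:-window]
    if len(previous) == window:
        return mean(c.mood for c in previous) - recent >= drop
    return False


if __name__ == "__main__":
    moods = [8, 8, 8, 6, 5, 5]  # a gradual decline over six days
    history = [DailyCheckIn(day=d, mood=m) for d, m in enumerate(moods)]
    if needs_human_review(history):
        print("Alert the human therapist for follow-up.")
```

The design choice worth noting is that the software only decides when to escalate; what happens after the alert stays with the human therapist.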

This model goes a long way toward solving the “supply and demand” problem. If AI could handle, say, 70% of the routine maintenance, a single therapist could manage a much larger caseload without burning out. This synergy between psychology and technology may be the most realistic way to address the global mental health crisis.
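How much larger that caseload becomes depends on how much of a therapist's time per patient is routine maintenance in the first place, which the 70% figure alone does not tell us. The back-of-the-envelope sketch below makes that dependence explicit. The function name `caseload_multiplier` and the `routine_share` parameter are hypothetical, and the model assumes, simplistically, that time freed by AI converts directly into capacity for more patients.

```python
def caseload_multiplier(routine_share, ai_fraction=0.7):
    """Estimate how many times more patients one therapist could carry.

    routine_share: fraction of per-patient time spent on routine
                   maintenance (an assumed input, between 0 and 1).
    ai_fraction:   fraction of that routine work handed to AI
                   (0.7 mirrors the 70% figure in the text).
    """
    if not 0 <= routine_share <= 1 or not 0 <= ai_fraction <= 1:
        raise ValueError("both fractions must be between 0 and 1")
    remaining = 1 - routine_share * ai_fraction  # human time still needed
    if remaining == 0:
        raise ValueError("no human time left; the model breaks down")
    return 1 / remaining


if __name__ == "__main__":
    for share in (0.25, 0.5, 0.75):
        print(f"routine share {share:.0%}: x{caseload_multiplier(share):.2f}")
```

Under these assumptions, if half of a therapist's time per patient is routine, offloading 70% of it leaves 65% of the original workload, so capacity grows by roughly half rather than tripling.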

Conclusion: The Indispensable Human Element

Ultimately, the question “Will AI substitute the traditional mental health therapist?” misses the point. We should instead ask, “How can we use AI to make mental health care more human?”

Technology is a tool, not a destination. It can provide us with maps, data, and directions, but it cannot walk the path with us. The journey toward mental wellness is often messy, illogical, and deeply emotional. It requires a level of courage that an algorithm—which has never known fear, loss, or love—cannot truly comprehend.

As we integrate these tools into our lives, we must remain vigilant. We must ensure that the “efficiency” of a chatbot does not replace the “efficacy” of a human connection. For those who are curious about how these digital advancements can safely integrate into their lives, or for those seeking a community that values both tech and the human spirit, you can find further insights on the future of wellness at Yourise.me. It is on platforms like this that we can discuss the ethical integration of AI while keeping the human experience at the center of the conversation.

The future of mental health is not a choice between human and machine; it is the sophisticated, ethical, and compassionate integration of both. By using AI to handle the data and humans to handle the heart, we can work toward a world where no one has to struggle with their mind in silence. Whether you are a student of psychology, a practicing psychiatrist, or someone simply looking for a bit of extra support, the digital revolution is here to stay. Let us ensure it serves the human spirit, rather than seeking to replace it.
